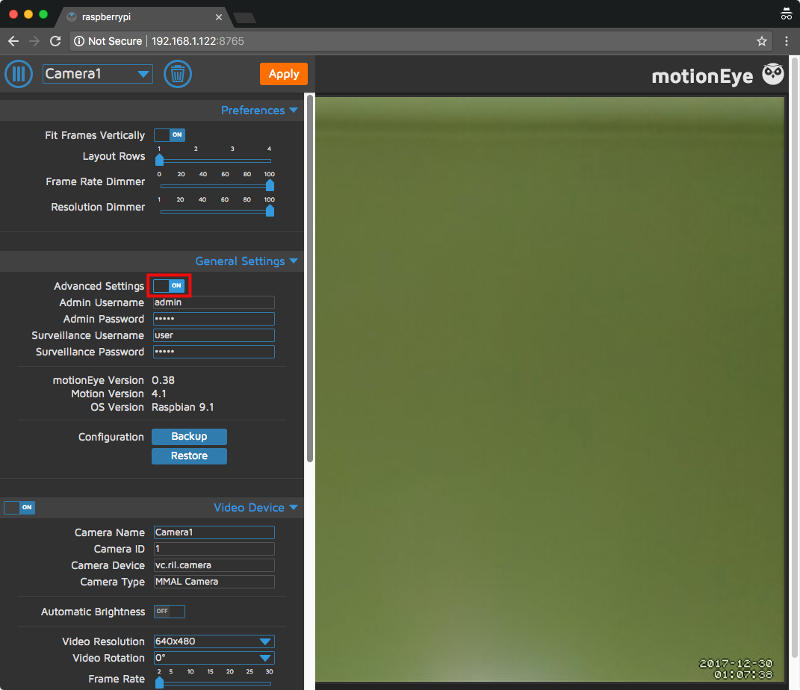

I found that the motion web UI is now enough for live streaming what motion "sees", but motionEyeOS helps with understanding many of motion's options. Then you move on to building your own infrastructure with those bricks (HTTP API, live streaming, h264 streaming, etc.).
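As an illustration of the HTTP API brick, here is a minimal sketch of an endpoint that a motion event hook could call. It is not taken from my setup: the `/motion` path, the JSON payload, and the names `make_hook_server` and `notify` are all hypothetical, and it only uses the Python standard library.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, Request, build_opener


def make_hook_server(host="127.0.0.1", port=0, on_motion=None):
    """Build an HTTP server exposing POST /motion (hypothetical endpoint name).

    Every request body is appended to server.received; on_motion, if given,
    is called with the body so the recorder can react to the event.
    """
    received = []

    class Handler(BaseHTTPRequestHandler):
        def do_POST(self):
            if self.path != "/motion":
                self.send_error(404)
                return
            length = int(self.headers.get("Content-Length", 0))
            body = self.rfile.read(length).decode("utf-8") if length else ""
            received.append(body)
            if on_motion is not None:
                on_motion(body)
            payload = json.dumps({"ok": True}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(payload)))
            self.end_headers()
            self.wfile.write(payload)

        def log_message(self, fmt, *args):
            pass  # keep the console quiet

    server = HTTPServer((host, port), Handler)
    server.received = received
    return server


def notify(url, camera="pi0-cam"):
    """Client side: what the detecting pi would send when motion starts."""
    data = json.dumps({"camera": camera, "event": "motion_start"}).encode("utf-8")
    request = Request(url, data=data, headers={"Content-Type": "application/json"})
    # Talk directly to the LAN host, ignoring any proxy environment variables.
    opener = build_opener(ProxyHandler({}))
    with opener.open(request, timeout=5) as response:
        return json.loads(response.read().decode("utf-8"))
```

On the recording pi you would call `make_hook_server("0.0.0.0", 8000).serve_forever()` (the port is an arbitrary choice). On the detecting pi, motion's `on_event_start` option can run any command, so a `curl` POST to that URL would work just as well as `notify`.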

Since you use motion, I suppose you want to have some kind of detection going on. I was toying with 2 pi0+cam and one pi4 some weeks ago. Here's my conclusion (I should write a blog post about it):

- use a pi0+camera dedicated to live streaming (fixed IP, RTSP stream); that's the one you want to connect to to see what's going on
- use another pi0+camera, or add an IR sensor to the first pi0, in the same spot, to detect motion events (either through motion installed on the pi or through the IR sensor)
- use a third connected pi4 for continuous recording of the live stream (in chunks of 5 minutes) to HDD/SSD and/or for recording the live feed once motion is triggered (you can use motion hooks on the pi0 to trigger an API on this pi)

You could also simply do motion detection on the pi4, but then you are at the mercy of artifacts in the stream, and they WILL trigger motion detection. That's why you want to do motion detection closest to the source.

Motion introduces too much latency to use as a two-in-one "detect and live stream" (the MJPEG conversion takes a lot of CPU time and adds artifacts). Most motionEyeOS tutorials I read are PoCs that make you install motionEyeOS on every pi and use the motion MJPEG stream instead of an h264 feed from the camera. That also introduces a lot of CPU bottlenecks and unreliable network connectivity.
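For the continuous recording in 5-minute chunks on the pi4, one way to do it is ffmpeg's segment muxer, copying the stream without re-encoding. This is a sketch under assumptions rather than the exact command I ran: ffmpeg itself, the RTSP URL, the output directory, and the helper name `build_segment_command` are all illustrative.

```python
import os


def build_segment_command(rtsp_url, out_dir, chunk_seconds=300):
    """Return an ffmpeg argument list that records an RTSP stream in chunks.

    rtsp_url and out_dir are placeholders for your own streaming pi and disk.
    """
    # strftime pattern: each chunk is named after the time it started.
    pattern = os.path.join(out_dir, "live_%Y%m%d-%H%M%S.mkv")
    return [
        "ffmpeg",
        "-rtsp_transport", "tcp",      # pull RTSP over TCP instead of UDP
        "-i", rtsp_url,
        "-c", "copy",                  # no re-encoding, so little CPU on the pi4
        "-f", "segment",               # split the output into separate files
        "-segment_time", str(chunk_seconds),
        "-reset_timestamps", "1",      # each chunk starts at timestamp zero
        "-strftime", "1",              # expand the date pattern in the file name
        pattern,
    ]
```

You could then start the recorder with something like `subprocess.run(build_segment_command("rtsp://192.168.1.20:8554/live", "/mnt/ssd"), check=True)`, where the address and mount point are made up for the example. Because the stream is copied rather than transcoded, the recorder does not add new compression artifacts of its own.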

I've been trying to get this working lately and nothing works "well". Motion is popular, but is limited to MJPEG rather than h.264. uv4l is closed source and not up to date with current OS versions. None of the various cvlc incantations that are supposed to do better actually seem to work on my Pi Zero W, etc.
